Scientific Paper

https://doi.org/10.17163/ings.n35.2026.04

pISSN: 1390-650X / eISSN: 1390-860X

OPTIMIZING CROWDSOURCED HUMAN COMPUTATION WITH ADAPTIVE INTELLIGENT USER INTERFACES FOR SCALABILITY AND EXPLAINABILITY


Received: 24-02-2025, Received after review: 09-07-2025, Accepted: 22-10-2025, Published: 01-01-2026

Abstract

Intelligent User Interfaces (IUIs) represent a transformative paradigm for advancing crowdsourced and human computation by optimizing task distribution, strengthening human–AI collaboration, and ensuring data integrity. This study presents a case study–driven analysis of an adaptive IUI framework designed to enhance scalability, engagement, and accuracy in large-scale, crowd-based problem-solving. By examining three representative platforms, namely Amazon Mechanical Turk (MTurk), Zooniverse (a citizen science platform), and AI-assisted medical image analysis in public health, the research investigates the influence of dynamic task allocation, Explainable AI (XAI), and gamification on user participation and task performance. The findings demonstrate that adaptive IUIs improve task accuracy relative to user expertise, reduce completion time as experience increases, and strengthen volunteer retention through gamified elements. Moreover, integrating XAI into AI-assisted medical diagnostics substantially elevates both trust and diagnostic precision. Collectively, these outcomes underscore the scalability, adaptability, and efficacy of IUIs in human computation, offering a comprehensive framework for future advancements in task optimization and explainability.


Keywords: Intelligent User Interfaces (IUIs), Human Computation, Human–Computer Interaction (HCI), Crowd Computing, Explainable Artificial Intelligence (XAI), Adaptive Task Allocation


1,* College of Engineering and Computer Science, Jazan University, Jazan, Saudi Arabia. Corresponding author ✉: jmartin@jazanu.edu.sa.

Suggested citation: R. J. Martin, “Optimizing crowdsourced human computation with adaptive intelligent user interfaces for scalability and explainability,” Ingenius, Revista de Ciencia y Tecnología, No. 35, pp. 50-63, 2026, doi: https://doi.org/10.17163/ings.n35.2026.04.

1. Introduction

Crowd computing and human computation have become increasingly vital in the digital era for executing complex, large-scale tasks in which human intelligence complements artificial intelligence, such as data labeling and content moderation on platforms like Amazon Mechanical Turk. However, these systems often face challenges related to efficiency, user engagement, and equitable task allocation. Intelligent User Interfaces (IUIs) address these limitations by incorporating adaptive mechanisms such as real-time feedback, expertise-based task assignment, and explainable AI into crowdsourced platforms [1]. By personalizing the user experience and refining task workflows, IUIs enhance scalability, improve user performance, and foster more effective collaboration between humans and AI. One major limitation of current crowd computing platforms is their static, one-size-fits-all approach to task assignment, which overlooks individual differences in skill and performance and often compromises output quality. Furthermore, interaction between human users and AI components remains limited, leading to suboptimal task outcomes. IUIs address these deficiencies through user modeling, real-time performance analysis, and AI-driven feedback, which together enable adaptive task allocation and more effective human–AI collaboration [2]. This paper explores the potential of IUIs to enhance scalability, efficiency, and quality in crowd-based human computation, particularly as these platforms are increasingly employed for complex tasks such as AI model training and disaster response. Through a case study–driven analysis, and without implementing a complete system, the paper evaluates the feasibility and impact of adaptive IUIs across four key dimensions: Adaptive Task Distribution, Explainable AI in Human Computation, User Modeling, and Gamification and Security Measures.
By examining both general-purpose platforms and specialized domains, these case studies provide a comprehensive assessment of how IUIs can strengthen human–AI collaboration in crowd computing environments. The main objectives of this research are as follows:

1. To determine how User Modeling, Gamification, and Adaptive Task Distribution interact in large-scale crowdsourcing environments.
2. To examine how gamification, adaptive task distribution, and user modeling enhance user engagement and task completion in citizen science programs.
3. To evaluate how Explainable AI (XAI) improves user engagement and trust in specialized domains such as public health.

Three case studies are presented to demonstrate these objectives, both independently and in combination, in real-world contexts, offering insights into the scalability, efficiency, and trustworthiness of human computation systems. Through these cases, the paper illustrates the theoretical potential of IUIs to address real-world challenges in crowd computing. The findings establish a framework for the future development and evaluation of IUI-driven human computation systems, paving the way for the next generation of crowd computing solutions [3]. The remainder of this paper is organized as follows: Section 1.1 reviews the relevant literature on adaptive task distribution, Explainable AI (XAI), user modeling, gamification, and security in human computation systems. Section 2 describes the methodologies used to explore these concepts and their application through case studies. Section 2.5 presents three case studies, namely Amazon Mechanical Turk (MTurk), Zooniverse, and a public health project involving XAI in medical diagnostics, which illustrate real-world applications of the proposed methods. Section 3 discusses the insights derived from these case studies, analyzing how adaptive methodologies improve scalability, engagement, and trust. Finally, Section 4 concludes the paper by summarizing the key findings and outlining directions for future research.

1.1. Related Works

This section reviews the current state of research on the use of Intelligent User Interfaces (IUIs) to enhance scalability, collaboration, and user engagement in human computation and crowd computing platforms. The discussion focuses on key areas such as dynamic task allocation, which optimizes task distribution based on real-time user performance, and Explainable AI (XAI), which improves transparency and trust in AI-assisted problem-solving. It also examines the role of gamification in motivating users, the importance of security and privacy, and the ways in which adaptive IUIs strengthen collaboration and scalability. Collectively, these topics provide a comprehensive overview of the current research landscape and highlight opportunities for future investigation.

1.2. Dynamic task allocation in human computation

Schmidbauer et al. [4] investigated human–robot task assignment in industrial environments, demonstrating that adaptive task sharing (ATS), which allows workers to influence task distribution, increases operator satisfaction. Although this study emphasized the importance of giving humans control, it also identified inconsistencies in task allocation, underscoring the need for better alignment between human preferences and task assignment mechanisms. In contrast, Wen et al. [5] proposed a task allocation framework for wireless sensor networks (WSNs) in edge computing environments. Their emphasis on energy efficiency and reliability through parallel task execution revealed the framework’s potential to significantly reduce energy consumption and execution time. However, limitations related to fault tolerance and dynamic network conditions were noted, suggesting that further research is needed to improve overall system robustness. Faccio et al. [6] extended task allocation research to collaborative robotics by introducing a model that adjusts robot speed according to the distance between the robot and the human operator, balancing productivity and safety. Their findings demonstrated notable performance improvements but also revealed inherent trade-offs, particularly the difficulty of maintaining safety without compromising productivity. These results underscore the need for real-time adaptability in dynamic work environments. Yuan et al. [7] proposed an Adaptive Task Allocation framework (ATA-HRL) for multi-human, multi-robot (MH-MR) teams, employing hierarchical reinforcement learning to enhance task adaptability. Their two-stage approach, comprising Initial Task Assignment (ITA) and Conditional Task Reallocation (CTR), proved effective; however, it also highlighted the critical importance of accurate initial allocations, particularly in highly uncertain environments. Similarly, Tamali et al. [8] investigated distributed task allocation in multi-robot systems, using a greedy algorithm to optimize task distribution in complex environments. Their simulation-based approach, implemented with the Robot Operating System (ROS), demonstrated notable improvements in task completion efficiency; however, scalability and communication constraints in real-world scenarios were identified as key challenges for future research.
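To make the greedy strategy concrete, the following minimal Python sketch assigns tasks to agents by repeatedly selecting the cheapest remaining agent–task pair, subject to a per-agent capacity. It is a generic illustration, not Tamali et al.'s ROS implementation; the function name `greedy_allocate` and the cost values are hypothetical.

```python
from typing import Dict, List, Tuple


def greedy_allocate(cost: Dict[Tuple[str, str], float],
                    agents: List[str],
                    capacity: int = 1) -> Dict[str, str]:
    """Greedy task allocation (illustrative sketch).

    cost maps (agent, task) -> estimated cost, e.g. travel time or expected effort.
    Each task goes to at most one agent; each agent takes at most `capacity` tasks.
    """
    load = {agent: 0 for agent in agents}
    assignment: Dict[str, str] = {}
    # Visit candidate pairs from cheapest to most expensive (ties broken by name).
    for _cost, agent, task in sorted((c, a, t) for (a, t), c in cost.items()):
        if task in assignment or load[agent] >= capacity:
            continue  # task already taken, or agent is at capacity
        assignment[task] = agent
        load[agent] += 1
    return assignment


# Hypothetical costs for two robots and two tasks.
costs = {("r1", "t1"): 2.0, ("r1", "t2"): 1.0,
         ("r2", "t1"): 3.0, ("r2", "t2"): 4.0}
print(greedy_allocate(costs, agents=["r1", "r2"]))  # {'t2': 'r1', 't1': 'r2'}
```

Greedy allocation is fast and easy to distribute, but it does not guarantee a globally optimal assignment; for one-to-one assignment with known costs, the Hungarian algorithm finds the optimum.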

1.3. Explainable AI in crowdsourced problem-solving

The reviewed studies present a diverse set of approaches to crowdsourced evaluation and Explainable AI (XAI), emphasizing user participation and the improvement of explanation quality through crowdsourcing and selective input techniques. Despite their differing objectives, these works share a common emphasis on leveraging human feedback to improve both the interpretability and accuracy of AI models. Both Jain et al. [9] and Kou et al. [10], [11] employed crowdsourcing to enhance AI explanations, although their approaches differed in scope and application. Jain et al. evaluated XAI techniques through a Game with a Purpose (GWAP), Eye into AI, which allowed users to rank methods such as LIME and Grad-CAM by interpretability. This game-based strategy identified Grad-CAM as the more effective XAI method for image classification. In contrast, Kou et al. [10] developed Crowd Graph, a multimodal knowledge graph framework that detects and explains fauxtography in social media posts by integrating textual and visual data. Both studies leveraged crowd participation to generate meaningful feedback and improve system performance. However, a critical limitation of both approaches lies in the reliability of crowdsourced data, underscoring the need for more robust mechanisms to verify and maintain data quality. Kou et al. [11] further examined crowdsourcing through the HC-COVID framework, which detects and explains COVID-19 misinformation. HC-COVID extends their earlier work with a hierarchical design that integrates contributions from both expert and non-expert crowd workers. This multi-layered approach substantially improved both misinformation detection accuracy and explanation quality. However, like other crowdsourced models, it faces the persistent challenge of bias and inconsistency in non-expert data, highlighting the need for more structured frameworks to ensure reliability and precision. Sawant et al. [12] and Lai et al. [13] addressed bias and subjectivity in AI explanations, particularly in socially sensitive domains such as hate speech detection and user communication behavior. Sawant et al. [12] employed AI-based classification with TabNet to identify hate speech in low-resource languages, including Hindi, and demonstrated how socio-political contexts can introduce bias into annotations. In comparison, Lai et al. [13] developed a selective explanation framework that tailors AI explanations to user preferences, such as relevance and abnormality, thereby enabling more context-aware interactions. Both studies emphasize the importance of aligning AI outputs with human interpretation to reduce bias and improve accuracy. However, while Sawant et al. showed that socio-political contexts significantly influence AI bias, Lai et al. focused on mitigating cognitive workload and user biases through selective input, underscoring the delicate balance between user engagement and AI autonomy.

A critical comparison across these studies reveals that, while crowdsourcing is an effective approach for enhancing AI explanations, it also introduces substantial challenges related to data quality and bias control. For instance, the reliance on crowdsourced data in Jain et al. [9] and Kou et al. [10] [11] demonstrates that, although such methods can improve system performance, they inherently risk inconsistencies in the quality of human-generated content. Similarly, Sawant et al. [12] and Lai et al. [13] highlighted the susceptibility of AI models that depend on human annotations to various forms of bias, particularly in domains characterized by socio-political sensitivities or subjectivity. Despite these limitations, all studies converge on the conclusion that adaptive and selective frameworks, whether hierarchical models like HC-COVID, game-based evaluations such as Eye into AI, or selective explanation techniques, represent a promising direction toward more transparent and interpretable AI systems.
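The data-quality concern can be illustrated with a simple quality-control baseline: a reliability-weighted majority vote, in which trusted contributors (for example, domain experts in a hierarchical design such as HC-COVID) carry more weight than anonymous workers. The following Python sketch is a generic illustration, not the aggregation method used in any of the cited studies; the worker identifiers and the weight of 3.0 are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def weighted_vote(votes: List[Tuple[str, str]],
                  weights: Dict[str, float],
                  default_weight: float = 1.0) -> Tuple[str, float]:
    """Aggregate (worker, label) votes; return the winning label and its weight share."""
    totals: Dict[str, float] = defaultdict(float)
    for worker, label in votes:
        # Workers without an assigned reliability fall back to a default weight.
        totals[label] += weights.get(worker, default_weight)
    winner = max(totals, key=lambda label: totals[label])
    return winner, totals[winner] / sum(totals.values())


votes = [("expert_1", "misinformation"),
         ("crowd_1", "reliable"),
         ("crowd_2", "reliable")]
label, share = weighted_vote(votes, weights={"expert_1": 3.0})
print(label, round(share, 2))  # misinformation 0.6
```

An unweighted majority would return `reliable` here. Rather than fixing weights by hand, they could instead be estimated, for instance from each worker's accuracy on gold-standard items.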

1.4. Gamification to enhance user engagement

The reviewed studies on gamification across various contexts, from health promotion to employee training and education, share a focus on user engagement, with each applying distinct game elements to influence behavior and outcomes. Despite similar objectives, these works diverge in their methodologies and highlight specific challenges in sustaining long-term engagement. Zhang et al. [14] and Hellín et al. [15] examined gamification as a strategy for behavior change in health and education, respectively. Zhang et al. developed DMCoach+, a gamified system that promotes healthy lifestyles through a two-level structure integrating personal goals and social competition. In contrast, Hellín et al. created a gamified learning environment incorporating points, leaderboards, and badges to enhance student motivation in programming courses. Both studies demonstrated that gamification can significantly enhance engagement; however, they also identified critical limitations. Zhang et al. observed that one-way communication with physicians constrained long-term user engagement, while Hellín et al. noted that lower-ranked students could become demotivated by leaderboards. These findings suggest that balancing competition with personalized interaction is essential to maintain long-term engagement in both health and educational contexts. Lu et al. [16] and Bitrián et al. [17] investigated gamification in commercial contexts, focusing on user engagement with brands and mobile applications. Lu et al. integrated the Mechanics-Dynamics-Aesthetics (MDA) framework into the Nike Run Club (NRC) app, emphasizing that enjoyment was the most significant driver of user engagement and brand loyalty. In contrast, Bitrián et al. examined how game design elements fulfill psychological needs, finding that achievement-oriented and social-oriented components enhanced engagement by satisfying the needs for competence, autonomy, and relatedness. Both studies demonstrated that gamification increases user engagement but also highlighted the importance of balancing fun and personalization to sustain long-term user interest. Finally, Alfaqiri et al. [18] developed a gamification framework for online training platforms, focusing on employee engagement. Its integration of multiple game elements, such as points, challenges, and leaderboards, mirrors techniques used in educational and commercial contexts. Like Bitrián et al., Alfaqiri et al. found that these elements effectively increased engagement; however, they also noted that the novelty effect tends to diminish over time, echoing concerns raised in other studies about the long-term sustainability of gamified systems.

1.5. Security and privacy concerns in crowd computing platforms

Owoh and Singh [19] developed SenseCrypt for mobile crowd-sensing (MCS) applications, integrating a K-means algorithm with Certificateless Aggregate Signcryption (CLASC) to label sensitive data and ensure its secure transmission. This approach reduced computational costs and communication overhead and made the framework robust against multiple attack vectors, including replay and forgery attacks. However, the framework’s adaptability to broader use cases remains limited, indicating the need for further research on its scalability and practical implementation. In contrast, Li et al. [20] proposed CrowdSFL, which integrates blockchain technology with federated learning to safeguard data in a decentralized manner. This design preserves privacy by keeping data local while using smart contracts for secure communication. Federated learning significantly reduced privacy risks by avoiding the centralization of sensitive information, distinguishing this approach from SenseCrypt’s signcryption-based method. An ElGamal-based re-encryption algorithm in CrowdSFL added a further layer of security. Although the outcomes demonstrated improvements in accuracy, security, and computational efficiency, the complexity and higher communication overhead of blockchain systems were identified as key challenges.

In comparison, both frameworks offer robust security mechanisms for protecting sensitive data in crowdsourcing environments. However, SenseCrypt focuses primarily on efficient signcryption for mobile sensor data, whereas CrowdSFL emphasizes decentralized privacy preservation through blockchain and federated learning. The communication overheads observed in Li et al.’s [20] work contrast with the computational efficiency demonstrated by Owoh and Singh [19], highlighting the inherent trade-off between decentralization and system complexity. Despite these differences, both studies underscore the continuing need for adaptable and scalable solutions to ensure data security in distributed crowdsourced systems.
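CrowdSFL's privacy argument rests on federated learning: participants share model parameters rather than raw data. The following Python sketch shows only the standard federated averaging (FedAvg) aggregation step, in which the server averages client parameter vectors weighted by each client's number of local samples. It deliberately omits CrowdSFL's blockchain, smart-contract, and ElGamal re-encryption layers, and the client values are hypothetical.

```python
from typing import List, Sequence


def fed_avg(client_params: List[Sequence[float]],
            sample_counts: List[int]) -> List[float]:
    """Federated averaging: sample-weighted mean of client parameter vectors."""
    if not client_params or len(client_params) != len(sample_counts):
        raise ValueError("need at least one client and one sample count per client")
    total = sum(sample_counts)
    dim = len(client_params[0])
    global_params = [0.0] * dim
    for params, n in zip(client_params, sample_counts):
        if len(params) != dim:
            raise ValueError("all clients must share the same parameter shape")
        # Each client contributes in proportion to its share of the training data.
        for i, value in enumerate(params):
            global_params[i] += value * n / total
    return global_params


# Two hypothetical clients; the second holds three times as much local data.
print(fed_avg([[1.0, 2.0], [3.0, 4.0]], sample_counts=[1, 3]))  # [2.5, 3.5]
```

Only the aggregated parameters leave the server in this step; raw crowd data stays on each client, which is the property the text credits with reducing privacy risk.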

1.6. Adaptable IUI for enhancing collaboration and scalability in crowd work

The following studies present several approaches to enhancing collaboration and scalability in human–AI systems and crowd-powered environments. A common thread across these works is the use of adaptive frameworks that dynamically integrate human input, AI processes, and crowdsourced contributions, although each system applies these principles in distinct, context-specific ways. Siangliulue et al. [21] developed IDEAHOUND, a system that supports large-scale collaborative ideation through real-time semantic modeling. By capturing user interactions on a virtual whiteboard, the system dynamically generates diverse and creative suggestions, fostering idea diversity and collaborative participation. However, its reliance on user-generated clusters, which occasionally lack clarity, highlights the challenge of fully leveraging crowd-powered semantic judgments. In contrast, Abbas et al. [22] developed Crowd of Oz (CoZ), a real-time conversational AI system that integrates synchronous crowdsourcing to handle complex social dialogues, particularly for mental health support. Unlike IDEAHOUND, CoZ emphasizes affective communication in real-time interactions. Although it effectively improves conversation quality, the system faces challenges in retaining workers and ensuring consistently high-quality responses, underscoring the need for additional training and skill development among crowd workers. Ponti and Seredko [23] also examined human–AI collaboration, focusing on citizen science. Their task allocation framework assigns simpler activities, such as data collection, to citizen participants, while AI systems and domain experts handle more complex processes such as data analysis. The study highlights how increasing AI capabilities may inadvertently marginalize volunteers, raising concerns about sustaining crowd engagement, a challenge that Siangliulue et al. [21] similarly observed in IDEAHOUND with respect to idea clustering. Building on the theme of citizen-centric systems, Stein and Yazdanpanah [24] introduced C-MAS, a multi-agent system that gives citizens greater control over decision-making in smart mobility and energy domains. Like CoZ, C-MAS places strong emphasis on privacy, fairness, and transparency, but it extends these principles by enabling citizens to actively shape decisions through personal intelligent agents. Nevertheless, trust, particularly concerning privacy and ethical decision-making, remains a central issue, reflecting the same need for worker trust observed in CoZ’s affective communication model. Lastly, Gupta et al. [25] developed COHUMAIN, a framework that fosters collective intelligence in human–AI teams through a socio-cognitive architecture. By sharing cognitive resources via transactive memory and attention systems, COHUMAIN seeks to enhance collaborative decision-making and scalability, paralleling the collaborative ideation enabled by IDEAHOUND. However, Gupta et al. identified the persistent challenge of maintaining long-term trust between human and AI collaborators, an issue also observed by Stein and Yazdanpanah [24] regarding trust between citizens and AI agents. While each of these frameworks advances adaptable collaboration and scalability in its own domain, they share common challenges related to trust, participant retention, and the quality of collaborative outcomes. These recurring obstacles underscore the ongoing need to refine training, dynamic task allocation, and human–AI integration to achieve truly scalable and effective crowd-powered systems.

2. Materials and Methods

This section presents a theoretical and evaluative framework for assessing the effectiveness of adaptive Intelligent User Interfaces (IUIs) in scalable human computation systems. It is grounded in well-established closed-loop adaptive UI principles, in which interface behavior continuously adjusts to user context and evolving preferences. In this dynamic feedback model, user actions generate signals that inform the IUI’s adaptive policies, which in turn modulate the task distribution, personalization, gamification, and explainability modules, in line with frameworks applied in AI-driven smart Product–Service Systems (SPSS) [26]. By synchronizing user behavior with real-time interface responses, this framework provides a robust theoretical foundation for adaptive task distribution and user modeling. This research employs a mixed case study design that integrates theoretical frameworks, namely Activity Theory, the Technology Acceptance Model, and adaptive UI principles, with quantitative empirical data collected from Amazon Mechanical Turk (MTurk), Zooniverse, and a clinical XAI environment. Rather than conducting randomized controlled trials, the study gathered quantitative pilot metrics and analyzed them using the TRIPLE C case study methodology [27]. Performance indicators include task accuracy, completion time, error rates, volunteer retention, diagnostic accuracy, and clinician trust, each supported by appropriate statistical tests.

Figure 1 illustrates the key layers involved in optimizing human computation environments. The diagram comprises three primary layers: the Input Layer, consisting of Human Participants, Crowd Tasks, and AI Systems; the Adaptive IUI Layer, which includes the Dynamic Task Allocation, User Modeling, Explainable AI, Gamification, and Security Measures modules; and the Outcome Layer, representing key results including Scalability, Collaboration, Engagement, and Data Integrity. Tasks and data flow from the Input Layer, where human participants and AI systems interact with assigned tasks, through the Adaptive IUI Layer for optimization, to the Outcome Layer, where they yield enhanced system performance. This framework illustrates how adaptive IUIs can be leveraged to enhance collaboration and scalability in crowdsourced environments. From the perspective of Activity Theory, a well-established interpretive framework in case study research, the operator (subject) interacts with tasks (object) through the IUI (tool). The adaptive interface mediates this interaction through real-time feedback loops, thereby enhancing task performance and overall system efficiency [28].
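The text states that each performance indicator is supported by appropriate statistical tests but does not name them at this point. For a binary indicator such as task accuracy (correct versus incorrect labels), one common choice is a two-proportion z-test. The Python sketch below implements that test with the standard library; the counts are hypothetical and are not results from this study.

```python
import math
from typing import Tuple


def two_proportion_z_test(successes_a: int, n_a: int,
                          successes_b: int, n_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference between two proportions (pooled standard error)."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical pilot counts: correct labels under adaptive vs. static assignment.
z, p = two_proportion_z_test(450, 500, 400, 500)
print(f"z = {z:.2f}, p = {p:.1e}")
```

The normal approximation is reasonable when each group has enough successes and failures; for small pilots, Fisher's exact test is a common alternative.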

Figure 1. Conceptual Framework of Adaptive IUI for Scalable Human Computation

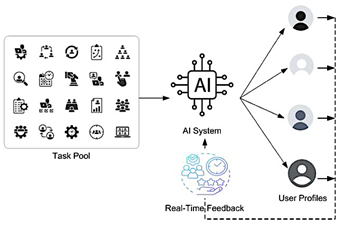

2.1. Adaptive Task Distribution

From the perspective of Activity Theory, the operator interacts with the interface to accomplish specific goals, with the IUI dynamically mediating this relationship through real-time feedback. Adaptive task distribution improves the efficiency of human computation in crowdsourcing by allocating tasks according to user skills, performance, and expertise. Figure 2 illustrates this model: tasks from the pool are assigned by an AI system that uses real-time feedback to optimize future allocations. Comparable approaches have proven effective in human–robot collaboration, such as Schmidbauer et al.’s adaptive task-sharing framework based on user capabilities and preferences [29], and in adaptive learning systems, where reassigning tasks according to prior outcomes improves performance [30]. This theoretical model will be examined through case studies in domains such as disaster response, data labeling, and urban planning to assess its scalability, practicality, and performance in large-scale human computation.
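The feedback loop in Figure 2 can be sketched in a few lines of Python. The example below keeps an exponentially weighted estimate of each worker's accuracy, assigns the pooled task whose difficulty is closest to that estimate, and updates the estimate after each graded answer. The 0–1 difficulty scale, the smoothing factor `alpha`, the prior of 0.5, and the class names are assumptions for illustration, not part of the framework described in the text.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Task:
    task_id: str
    difficulty: float  # hypothetical scale: 0 (easy) to 1 (hard)


@dataclass
class AdaptiveAllocator:
    alpha: float = 0.3        # weight given to the newest outcome
    prior_skill: float = 0.5  # starting estimate for unseen workers
    skill: Dict[str, float] = field(default_factory=dict)

    def estimate(self, worker: str) -> float:
        return self.skill.get(worker, self.prior_skill)

    def assign(self, worker: str, pool: List[Task]) -> Task:
        """Remove and return the pooled task whose difficulty best matches the worker's skill."""
        s = self.estimate(worker)
        task = min(pool, key=lambda t: abs(t.difficulty - s))  # raises ValueError if pool is empty
        pool.remove(task)
        return task

    def feedback(self, worker: str, correct: bool) -> None:
        """Exponentially weighted update from real-time correctness feedback."""
        s = self.estimate(worker)
        self.skill[worker] = (1 - self.alpha) * s + self.alpha * (1.0 if correct else 0.0)


pool = [Task("easy", 0.2), Task("medium", 0.5), Task("hard", 0.9)]
allocator = AdaptiveAllocator()
first = allocator.assign("w1", pool)        # new worker starts at 0.5 -> "medium"
for _ in range(3):
    allocator.feedback("w1", correct=True)  # estimate rises to about 0.83
second = allocator.assign("w1", pool)       # now closest to "hard"
print(first.task_id, second.task_id)        # medium hard
```

The exponential weighting lets recent performance dominate, so the allocator reacts to a worker improving (or tiring) without storing the full answer history.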

Figure 2. Adaptive Task Distribution

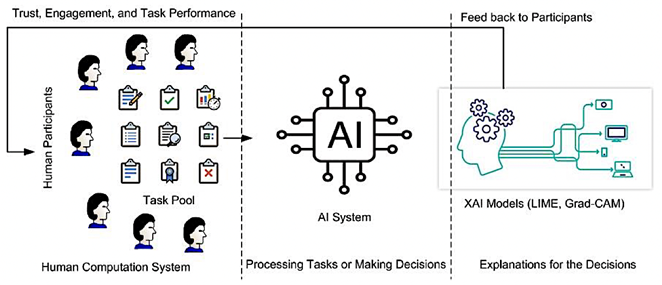

2.2. Explainable AI in Human Computation

Explainable AI and gamification strategies in this framework are supported by the Technology Acceptance Model (TAM), which posits that greater perceived usefulness and transparency directly enhance user trust and adoption [31]. Explainable AI (XAI) enhances trust, engagement, and efficiency in crowdsourced tasks by making AI decision processes transparent to human participants. Widely adopted XAI methods such as LIME and Grad-CAM increase interpretability: LIME fits a simple, interpretable surrogate model around an individual prediction to estimate the contribution of each input feature, while Grad-CAM highlights the image regions that most influenced the decision of a convolutional neural network (CNN) [32, 33]. Together, these tools help users understand the rationale behind AI outcomes, thereby fostering accountability and informed participation. In practical crowd work, such as data labeling, analysis, and public health initiatives, XAI enables participants to validate AI-generated outputs and identify potential biases. For example, workers on labeling platforms can use LIME explanations to evaluate and correct model predictions, while clinicians using Grad-CAM visualizations can pinpoint the regions of medical images that influenced AI diagnoses, thereby improving collaborative diagnostic accuracy and trust [34]. By embedding XAI into case studies across domains, this research assesses its impact on user trust, task performance, and engagement, moving beyond theoretical advantages to demonstrate real-world applicability (Figure 3).
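The LIME idea described above can be sketched with NumPy: perturb an input by switching features off, query the black-box model, and fit a proximity-weighted linear surrogate whose coefficients serve as feature contributions. This is a simplified illustration, not the API of the `lime` package; the zero baseline, the exponential kernel on the fraction of removed features, the sample count, and the function name `lime_style_weights` are all assumptions.

```python
from typing import Callable

import numpy as np


def lime_style_weights(predict: Callable[[np.ndarray], float], x: np.ndarray,
                       n_samples: int = 500, kernel_width: float = 0.75,
                       seed: int = 0) -> np.ndarray:
    """Estimate per-feature contributions to predict(x) with a local linear surrogate."""
    rng = np.random.default_rng(seed)
    d = x.shape[0]
    # Binary masks: 1 keeps a feature, 0 replaces it with a zero baseline.
    masks = rng.integers(0, 2, size=(n_samples, d))
    masks[0] = 1  # always include the unperturbed instance
    preds = np.array([predict(row) for row in masks * x])
    # Proximity kernel: samples that keep more of the original features get more weight.
    distance = 1.0 - masks.sum(axis=1) / d
    weights = np.exp(-(distance ** 2) / kernel_width ** 2)
    # Weighted least squares on the masks, with an intercept column.
    design = np.hstack([np.ones((n_samples, 1)), masks])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * sw[:, None], preds * sw, rcond=None)
    return coef[1:]  # drop the intercept


# Hypothetical black-box model: feature 0 helps, feature 1 hurts, feature 2 is ignored.
def black_box(z: np.ndarray) -> float:
    return float(3.0 * z[0] - 2.0 * z[1])


contributions = lime_style_weights(black_box, np.array([1.0, 1.0, 1.0]))
print(np.round(contributions, 3))  # close to 3, -2 and 0
```

Because this toy model is itself linear, the surrogate recovers its coefficients almost exactly; for a real classifier, the coefficients are only a local approximation around the explained instance, which is why crowd workers are still needed to judge whether an explanation makes sense.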

Figure 3. Hypothetical Model for Explainable AI in Human Computation

2.3. User Modeling and Dynamic Task Allocation

User modeling constructs a computational representation of individual preferences, expertise, and behavior, enabling crowd-work systems (e.g., MTurk, Zooniverse) to allocate tasks dynamically according to each user’s skill set. Techniques such as collaborative filtering, which matches users to tasks based on shared behavior, enhance efficiency; for instance, users who excel in specific labeling categories can be reassigned to similar tasks [35]. Reinforcement learning further refines this process by adjusting assignments in real time based on performance feedback, particularly in gamified settings where the system learns users’ strengths and optimizes task distribution [36]. These modeling techniques enhance personalization and adaptability while reducing task redundancy. Personalized task assignments increase user engagement by aligning tasks with individual capabilities, whereas the dynamic reassignment of complex tasks helps sustain motivation and productivity [37]. Moreover, by avoiding repetitive task allocations, user modeling prevents boredom and disengagement. This study evaluates these effects through real-world case studies on platforms such as MTurk and Zooniverse, demonstrating how collaborative filtering and reinforcement learning improve scalability and effectiveness in human computation [38].
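A minimal user-based collaborative filtering sketch shows how a user model can predict a worker's accuracy on a task category they have not yet attempted, using workers with similar histories. The similarity measure (one minus the mean absolute accuracy difference on shared categories), the data layout, and the worker and category names are hypothetical simplifications; a production system could instead use mean-centered similarities, top-k neighborhoods, or matrix factorization.

```python
from typing import Dict, Optional

# worker -> {task category -> observed accuracy in [0, 1]}
History = Dict[str, Dict[str, float]]


def similarity(a: Dict[str, float], b: Dict[str, float]) -> float:
    """1 - mean absolute accuracy difference over shared categories (0 if none shared)."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    return 1.0 - sum(abs(a[c] - b[c]) for c in shared) / len(shared)


def predict_accuracy(history: History, worker: str, category: str) -> Optional[float]:
    """Similarity-weighted average of other workers' accuracy on `category`."""
    num = den = 0.0
    for other, record in history.items():
        if other == worker or category not in record:
            continue
        sim = similarity(history[worker], record)
        if sim > 0:
            num += sim * record[category]
            den += sim
    return num / den if den else None  # None: no comparable workers


history = {
    "alice": {"birds": 0.95, "galaxies": 0.60},
    "bob":   {"birds": 0.90, "galaxies": 0.65, "craters": 0.85},
    "carol": {"birds": 0.50, "galaxies": 0.95, "craters": 0.40},
}
# Alice resembles Bob more than Carol, so the estimate leans toward Bob's 0.85.
print(round(predict_accuracy(history, "alice", "craters"), 3))  # 0.676
```

A platform could then offer Alice crater-labeling tasks only if the predicted accuracy clears a threshold, which is the skill-based reassignment the paragraph describes.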

2.4. Gamification and Security Measures

Gamification, the use of game-like elements such as leaderboards, points, and rewards to enhance motivation in non-gaming contexts, has proven effective in human computation platforms. By fostering both intrinsic and extrinsic motivation, gamification increases participant retention and improves task performance [39]. For instance, platforms that award badges and display user rankings frequently report higher levels of engagement and task completion [40]. However, increased engagement alone is insufficient; crowdsourced systems must also address inherent security risks. Threats such as data breaches, task manipulation, and fraud can undermine both data integrity and user trust. To mitigate these vulnerabilities, robust security measures, including encryption, authentication protocols, Certificateless Aggregate Signcryption (CLASC), and blockchain-based frameworks, are essential [41]. When thoughtful gamification and strong security architectures are integrated effectively, crowd platforms can scale efficiently while preserving trust, participation, and data protection.
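The pairing of gamification with security can be illustrated with a small Python sketch in which points and badges are granted only for submissions that carry a valid, single-use HMAC token issued when the task was assigned, so that forged or replayed submissions earn nothing. HMAC authentication stands in here for the broader measures listed above (it is not CLASC or a blockchain scheme), and the point values, badge thresholds, key, and class name are hypothetical.

```python
import hashlib
import hmac
from collections import defaultdict
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical badge thresholds, checked from highest to lowest.
BADGES = [(100, "Gold"), (50, "Silver"), (10, "Bronze")]


class SecureLeaderboard:
    def __init__(self, server_key: bytes) -> None:
        self._key = server_key  # kept server-side; never sent to workers
        self.points: Dict[str, int] = defaultdict(int)
        self._redeemed: Set[Tuple[str, str]] = set()

    def issue_token(self, worker: str, task_id: str) -> str:
        """Sign the (worker, task) assignment with HMAC-SHA256."""
        msg = f"{worker}|{task_id}".encode()
        return hmac.new(self._key, msg, hashlib.sha256).hexdigest()

    def submit(self, worker: str, task_id: str, token: str, correct: bool) -> bool:
        """Award points only for an authentic, first-time submission."""
        if (worker, task_id) in self._redeemed:
            return False  # replayed submission
        if not hmac.compare_digest(self.issue_token(worker, task_id), token):
            return False  # forged token, or a token issued to someone else
        self._redeemed.add((worker, task_id))
        self.points[worker] += 10 if correct else 2  # hypothetical scoring rule
        return True

    def badge(self, worker: str) -> Optional[str]:
        score = self.points.get(worker, 0)
        for threshold, name in BADGES:
            if score >= threshold:
                return name
        return None

    def top(self, n: int = 3) -> List[Tuple[str, int]]:
        """Leaderboard: highest points first, ties broken by worker id."""
        return sorted(self.points.items(), key=lambda kv: (-kv[1], kv[0]))[:n]


board = SecureLeaderboard(server_key=b"hypothetical-secret")
token = board.issue_token("w1", "task-42")
print(board.submit("w1", "task-42", token, correct=True))  # True
print(board.submit("w1", "task-42", token, correct=True))  # False (replay)
print(board.submit("w2", "task-42", token, correct=True))  # False (not w2's token)
print(board.top(), board.badge("w1"))                      # [('w1', 10)] Bronze
```

Using `hmac.compare_digest` rather than `==` avoids leaking information about the expected token through comparison timing.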

2.5. Case Studies

Case Study 1: Amazon Mechanical Turk (MTurk)

Amazon Mechanical Turk (MTurk) is one of the leading microtask crowdsourcing platforms, enabling companies and researchers to post Human Intelligence Tasks (HITs), small units of work, to a global workforce. Because these tasks range from data labeling and image classification to survey participation, MTurk is well suited to evaluating Adaptive Task Distribution, User Modeling, and Gamification. Given its large user base and flexible task allocation mechanisms, MTurk offers a practical environment for assessing how adaptive methodologies can enhance task efficiency and user engagement.

· Adaptive Task Distribution: In the adaptive framework, MTurk tasks are assigned dynamically based on user experience, skill level, and historical performance, so that simpler tasks go to newer workers while more complex tasks are reserved for experienced ones. This real-time feedback loop optimizes task efficiency and reduces error rates.

· User Modeling: By collecting user performance data, such as task completion accuracy and speed, the system can tailor task assignments to each user’s unique strengths. This approach ensures stronger task–worker alignment and fosters a more engaging user experience.

· Gamification: Although MTurk lacks built-in gamification features, incorporating elements such as leaderboards, ribbons, and rewards can enhance worker engagement and retention while motivating participants to contribute more frequently.
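The skill-based allocation rule in these bullets can be sketched as a small worker model that is updated after every graded task. The tier names, the attempt and accuracy thresholds, and the smoothing prior are hypothetical values chosen for illustration. MTurk does not expose this logic itself, and the sketch does not call any MTurk API.

```python
from dataclasses import dataclass

# Hypothetical tiers, checked from hardest to easiest:
# (tier name, minimum tasks attempted, minimum smoothed accuracy)
TIERS = [
    ("complex", 20, 0.90),
    ("intermediate", 5, 0.75),
    ("simple", 0, 0.0),
]


@dataclass
class Worker:
    worker_id: str
    correct: int = 0
    attempted: int = 0

    def accuracy(self) -> float:
        # Laplace-style smoothing: a new worker starts at 0.5 rather than 0 or 1.
        return (self.correct + 1) / (self.attempted + 2)


def assign_tier(worker: Worker) -> str:
    """Return the hardest tier whose thresholds the worker currently meets."""
    for name, min_attempted, min_accuracy in TIERS:
        if worker.attempted >= min_attempted and worker.accuracy() >= min_accuracy:
            return name
    return "simple"


def record_result(worker: Worker, was_correct: bool) -> str:
    """Update the worker model after a graded task and return the next tier."""
    worker.attempted += 1
    worker.correct += int(was_correct)
    return assign_tier(worker)
```

The tier is recomputed after each graded task, so a worker is promoted or demoted as their record changes. This is one simple way to realize the real-time feedback loop described above.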

Case Study 2: Zooniverse

Zooniverse, the world’s largest citizen science platform, enables participants to contribute to a diverse range of research projects by classifying data, analyzing images, and transcribing historical texts. As a crowdsourced human computation platform, Zooniverse depends on public participation, making it an ideal environment for exploring Adaptive Task Distribution, User Modeling, and Gamification to optimize engagement, task efficiency, and scalability.


· Adaptive Task Distribution: Tasks are allocated based on user productivity and experience. Experienced participants handle more complex analyses, improving task accuracy and overall efficiency, while new volunteers begin with simpler classifications.

· User Modeling: By tracking user behavior and performance, the platform assigns tasks tailored to each participant’s skills. Assigning similar tasks to users who excel in specific projects further enhances engagement and accuracy.

· Gamification: Features such as project milestones, progress tracking, and achievement badges motivate volunteers to contribute consistently, fostering a strong sense of community within the platform.
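The milestone and progress-tracking mechanics listed above reduce to simple threshold checks. In the sketch below, the badge names and thresholds are hypothetical and do not reflect Zooniverse's actual reward scheme. The code shows how newly earned badges and a project-level progress fraction could be computed after each classification.

```python
# Hypothetical badge thresholds: (classifications required, badge name), ascending.
MILESTONES = [(10, "Novice"), (100, "Contributor"), (1000, "Expert")]


def badges_earned(count: int) -> list:
    """All badges unlocked by a volunteer who has made `count` classifications."""
    return [name for threshold, name in MILESTONES if count >= threshold]


def newly_earned(before: int, after: int) -> list:
    """Badges unlocked by moving from `before` to `after` classifications."""
    already = set(badges_earned(before))
    return [name for name in badges_earned(after) if name not in already]


def project_progress(classified: int, target: int) -> float:
    """Share of the project's subjects classified so far, capped at 1.0."""
    if target <= 0:
        return 0.0
    return min(classified / target, 1.0)
```

A client could call `newly_earned` after each submission to trigger a badge notification. It could also display `project_progress` as a shared progress bar, which supports the community visibility effect the bullet describes.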

Case Study 3: Public Health

In the healthcare sector, AI models are increasingly employed for diagnostic support, predictive modeling, and medical image analysis. Although these models achieve high accuracy in disease detection, their lack of transparency raises concerns about trust and interpretability. Explainable AI (XAI) addresses this challenge by making AI decision processes more understandable, enabling healthcare professionals to validate predictions and collaborate more effectively with AI systems. This case study examines how LIME (Local Interpretable Model-agnostic Explanations) and Grad-CAM (Gradient-weighted Class Activation Mapping) enhance trust in AI-assisted medical image analysis.

· LIME: By perturbing input data and analyzing how AI predictions change, LIME generates interpretable explanations of model behavior. In medical imaging, it clarifies why an AI system labels a particular region of an MRI or X-ray as problematic, enabling doctors to understand and validate the model’s reasoning.

· Grad-CAM: This technique generates heatmaps that illustrate the regions of medical images influencing AI predictions. It is particularly valuable for identifying diseases such as cancer, as it visually highlights the areas that guided the AI’s decision-making, thereby enhancing interpretability.
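The LIME bullet can be made concrete with a NumPy sketch that reproduces the core idea from [32] in simplified form. The sketch blanks out random subsets of image segments, queries the model on each perturbed image, and fits a proximity-weighted linear surrogate whose coefficients rank the segments. It is not the `lime` package. The segmentation is assumed to be given, blanked segments are set to zero, and the proximity kernel uses a simplified distance (the fraction of segments removed).

```python
import numpy as np


def lime_segment_importance(predict_fn, image, segments, num_samples=500,
                            kernel_width=0.25, seed=0):
    """LIME-style local surrogate: which image segments drive one prediction?

    predict_fn: maps a batch of images (N, H, W) to one class probability each (N,)
    image:      (H, W) array
    segments:   (H, W) integer array labelling each pixel's segment 0..K-1
    """
    rng = np.random.default_rng(seed)
    k = int(segments.max()) + 1

    # Each row is a binary mask over segments: 1 = keep, 0 = blank out (set to zero).
    z = rng.integers(0, 2, size=(num_samples, k))
    z[0] = 1  # always include the unperturbed image
    perturbed = np.stack([image * row[segments] for row in z])
    y = predict_fn(perturbed)

    # Weight samples by proximity to the original (fewer removed segments = closer).
    distance = 1.0 - z.mean(axis=1)
    weights = np.exp(-(distance ** 2) / kernel_width ** 2)

    # Weighted least squares for y ~ b0 + z @ coef.
    design = np.hstack([np.ones((num_samples, 1)), z])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * sw[:, None], y * sw, rcond=None)
    return coef[1:]  # one importance score per segment
```

For a real MRI or X-ray classifier, `predict_fn` would wrap the trained network and `segments` would come from a superpixel algorithm. Segments with large positive coefficients are the regions the model relied on.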

3. Results and discussion

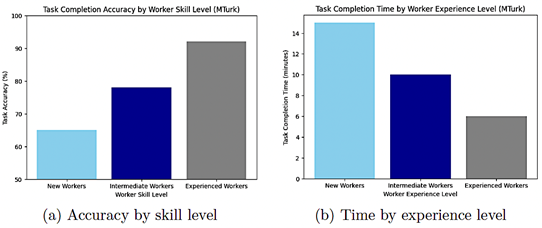

Evaluation follows the TRIPLE C reporting principles, a recognized framework for case study evaluations encompassing context, methods, and complexity, and ensures scientific rigor through data triangulation across performance metrics [27, 42]. Each case study establishes clear evaluation objectives and measures outcomes using quantitative indicators such as task accuracy, completion time, error rate, volunteer retention, diagnostic accuracy, and trust scores. This structured, data-driven approach enhances objectivity and grounds the findings in a clearly defined evaluation protocol.

Figure 4 illustrates the task completion performance of the MTurk crowdsourcing platform. The adaptive task allocation system on MTurk substantially improves task efficiency by assigning complex tasks to skilled workers, resulting in higher accuracy and faster completion times. Personalized User Modeling further enhances engagement by tailoring task assignments to individual strengths, reducing redundancy and preventing skill mismatches. Although Gamification is not yet a core feature of MTurk, existing research suggests that competitive and reward-based incentives could increase participation and worker retention, particularly for repetitive tasks.

This case study demonstrates how adaptive approaches can enhance the scalability, effectiveness, and user engagement of crowdsourced platforms. Dynamic task assignment and personalized user modeling optimize platform outcomes by improving resource utilization. Although gamification elements are not yet fully integrated, the structure of MTurk makes it a strong candidate for such enhancements. Future research should investigate incorporating gamification features and refining user profiling techniques to maximize efficiency and foster sustained participation in large-scale crowd work environments.


Figure 4. Task Completion Performance of MTurk
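Several of the quantitative indicators used in this evaluation can be derived directly from task logs. The sketch below assumes a hypothetical log format with one record per completed task, holding the worker's expertise level, whether the answer was correct, and the completion time in seconds. From these records it computes accuracy, error rate, and mean completion time per expertise level. It does not reproduce the data behind Figure 4.

```python
from collections import defaultdict
from statistics import mean


def metrics_by_expertise(records):
    """Group task records by expertise level and summarise each group.

    Each record is a dict with hypothetical keys:
    'expertise' (str), 'correct' (bool), 'seconds' (float).
    """
    groups = defaultdict(list)
    for record in records:
        groups[record["expertise"]].append(record)

    summary = {}
    for level, rows in groups.items():
        accuracy = mean(1.0 if r["correct"] else 0.0 for r in rows)
        summary[level] = {
            "n": len(rows),
            "accuracy": accuracy,
            "error_rate": 1.0 - accuracy,
            "mean_completion_s": mean(r["seconds"] for r in rows),
        }
    return summary
```

Comparing these per-level summaries with and without adaptive allocation is one way to operationalize the accuracy and completion-time comparisons discussed for MTurk.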


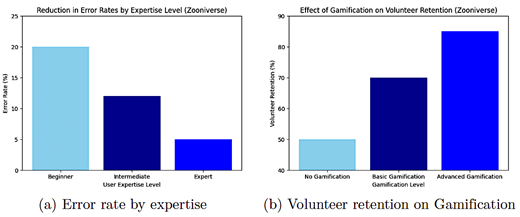

Implementing Adaptive Task Distribution reduces errors by aligning tasks with users’ expertise. User modeling enhances participation by tailoring task assignments to individual strengths, resulting in higher retention and satisfaction. Gamification significantly improves motivation, as volunteers demonstrate greater commitment when rewarded with badges and milestone achievements. The visibility of overall project progress further reinforces sustained engagement. Figure 5 illustrates the platform’s performance in terms of error rate by expertise level and the impact of gamification on volunteer retention.


Figure 5. Adaptive Task Distribution Performance of Zooniverse
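Volunteer retention, one of the quantities summarized for Zooniverse, requires an explicit operational definition before it can be compared across conditions. The sketch below assumes one such definition, chosen here for illustration. A volunteer counts as retained if they contribute again at least `horizon_days` after their first contribution. The cohort data in the example call are hypothetical placeholders, not study results.

```python
from datetime import date


def retention_rate(activity: dict, horizon_days: int = 30) -> float:
    """Fraction of volunteers who contributed again at least `horizon_days`
    after their first contribution.

    activity maps a volunteer id to a non-empty list of contribution dates.
    """
    if not activity:
        return 0.0
    retained = 0
    for dates in activity.values():
        first = min(dates)
        if any((d - first).days >= horizon_days for d in dates):
            retained += 1
    return retained / len(activity)


# Hypothetical cohorts: one exposed to badges and milestones, one control.
gamified = {"v1": [date(2025, 1, 1), date(2025, 2, 15)], "v2": [date(2025, 1, 3)]}
control = {"v3": [date(2025, 1, 2), date(2025, 1, 10)]}
print(retention_rate(gamified), retention_rate(control))  # prints 0.5 0.0
```

The choice of horizon strongly affects the resulting rates. Any comparison between gamified and non-gamified cohorts should therefore fix it in advance and report it.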


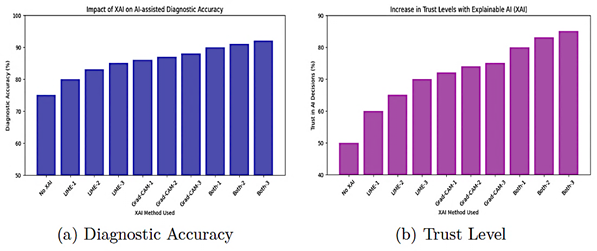

This case study demonstrates how adaptive techniques enhance scalability, engagement, and efficiency in crowdsourced platforms. Personalized task assignments and gamification strategies foster an engaging and sustainable model for volunteer-driven research. Zooniverse serves as a valuable example of how integrating adaptive IUIs into citizen science can enhance user participation and improve task outcomes, and comparable approaches are applicable to other large-scale human computation systems.

The impact of integrating Explainable AI (XAI) into AI-assisted medical diagnostics is illustrated in Figure 6. Incorporating XAI markedly enhances transparency and trust in AI systems. LIME enables clinicians to validate AI decisions by identifying the image features that influenced classifications, thereby improving diagnostic accuracy. Similarly, Grad-CAM heatmaps provide visual explanations of AI predictions, allowing healthcare professionals to interpret model outputs more effectively and refine their diagnoses. Combining both methods further enhances the performance of clinical decision support systems (CDSS) in terms of diagnostic accuracy and trust. Analyses indicate that including XAI techniques strengthens collaboration between AI systems and medical experts, leading to more reliable diagnoses and greater confidence in AI-driven assessments.

This case study highlights the critical role of XAI in healthcare, where transparency in decision-making is essential. Integrating LIME and Grad-CAM into medical diagnostics bridges the gap between AI-generated predictions and human interpretability, ensuring that AI-driven decisions remain verifiable and trustworthy. Future research should focus on optimizing XAI for clinical applications to improve usability and strengthen collaboration between AI systems and healthcare professionals. These findings suggest that XAI should be regarded as a fundamental component of AI-assisted diagnostic tools, particularly in high-stakes domains such as healthcare, where accuracy and explainability are paramount.


Figure 6. Impact of XAI Integration in AI-assisted medical diagnostics
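The Grad-CAM heatmaps discussed in this case study follow the computation introduced in [33]. The gradients of the class score y^c with respect to each feature map A^k of a chosen convolutional layer are global-average-pooled into weights α_k^c, and the heatmap is ReLU(Σ_k α_k^c A^k). The NumPy sketch below implements only that step. It assumes the activations and gradients have already been extracted from the network (for example, with framework hooks, not shown), and it omits upsampling the map to the input image resolution.

```python
import numpy as np


def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Grad-CAM map from one layer's feature maps and their gradients.

    activations: (K, H, W) feature maps A^k of the chosen convolutional layer
    gradients:   (K, H, W) gradients of the class score y^c with respect to A^k
    """
    # alpha_k: global-average-pooled gradient of each channel, shape (K,).
    alpha = gradients.mean(axis=(1, 2))
    # Weighted sum of feature maps over channels, shape (H, W).
    cam = np.tensordot(alpha, activations, axes=1)
    # ReLU keeps only regions with positive influence on the class score.
    cam = np.maximum(cam, 0.0)
    # Normalise to [0, 1] for overlaying on the image.
    peak = cam.max()
    return cam / peak if peak > 0 else cam
```

The normalized map would then be resized to the image dimensions and overlaid on the MRI or X-ray so that clinicians can see which regions supported the prediction.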


Collectively, these case studies demonstrate that although adaptive approaches are generally effective in crowdsourced environments, their success depends on contextual factors. Gamification and adaptive task allocation substantially enhance user engagement and task performance on platforms such as MTurk and Zooniverse. Explainable AI, in contrast, is crucial for ensuring that AI systems are not only effective but also transparent and reliable in specialized domains such as healthcare. This hybrid approach underscores the versatility and significance of these techniques in optimizing human computation systems, offering both domain-specific recommendations for contexts requiring higher levels of scrutiny and trust, as well as generalizable insights for large-scale platforms.

3.1. Impact of IUI-Driven Approaches Compared to Non-IUI Systems

· Scalability and Flexibility: The IUI-driven systems implemented in MTurk and Zooniverse are not only more scalable than traditional models but also more flexible, enabling them to adapt to the increasing complexity and scale of crowdsourced tasks. This adaptability is largely absent in static, non-IUI systems, which struggle to manage large-scale or rapidly evolving workloads effectively.

· Enhanced Trust and Collaboration: The integration of XAI into IUI-driven systems, particularly in the public health case, demonstrates how these systems foster stronger collaboration between humans and AI. This represents a substantial improvement over traditional AI models, in which limited transparency often undermines user trust and hinders the acceptance of AI-driven processes.

An explicit comparison of adaptive IUI methodologies with non-IUI-driven approaches shows that the former offer substantial improvements in efficiency, scalability, engagement, and trust. The adaptive task distribution, user modeling, and gamification strategies employed on platforms such as MTurk and Zooniverse address the limitations of static task allocation and impersonal systems, while XAI introduces the transparency and collaboration often lacking in traditional AI models. This comparison underscores that IUI-driven systems provide a more robust, scalable, and trustworthy framework for optimizing human computation environments.


3.2. Limitations

Despite its contributions, the proposed Adaptive IUI framework presents several limitations:

1. The effectiveness of dynamic task allocation and personalization relies heavily on accurate and up-to-date user models. Current research highlights the challenge of capturing complex, multidimensional user attributes, such as emotion, context, and behavior, while preserving model consistency and ensuring user privacy.

2. Integrating multiple adaptive modules, including task allocation, gamification, explainability, and security, can impose significant computational and implementation overhead. Prior studies on adaptive UI frameworks have identified system performance, resource consumption, and maintainability as persistent challenges.

3. Although gamification can enhance engagement, it is prone to the novelty effect: an initial surge in user interest followed by a gradual decline. Furthermore, excessive reliance on extrinsic rewards may lead to the overjustification effect, which undermines intrinsic motivation. These phenomena underscore the need for a balanced, long-term gamification strategy.

4. Adaptive IUIs collect and analyze sensitive data, including user behavior and channel state information (CSI), which raises significant privacy, security, and ethical challenges. Ensuring robust data protection and maintaining transparency are essential to preserving user trust.

4. Conclusions

This benchmarking study demonstrates the value of combining adaptive approaches, such as Adaptive Task Distribution, User Modeling, Gamification, and Explainable AI (XAI), to enhance human computation systems. By employing a hybrid strategy that integrates two general-purpose case studies (MTurk and Zooniverse) with a specialized public health case study using XAI, the research provides comprehensive insights into the adaptability, scalability, and reliability of adaptive IUIs. Personalized task assignments and dynamic task allocation substantially improve task performance and engagement on large-scale platforms such as Zooniverse and Amazon Mechanical Turk. Real-time task distribution based on user competence assigns tasks to the most qualified participants, increasing productivity and reducing error rates. Furthermore, incorporating gamification elements enhances user motivation and long-term engagement, which is crucial for sustaining participation in crowdsourced platforms.

The public health case study highlights the critical role of transparency and trust in high-stakes environments. Integrating XAI methods such as LIME and Grad-CAM enhances the interpretability of AI-driven diagnostics, enabling healthcare practitioners to understand and evaluate AI-generated results. This transparency fosters collaboration and strengthens confidence in AI systems, which may ultimately contribute to improved patient outcomes.

In conclusion, this study demonstrates that adaptive IUI systems are scalable and customizable across both general crowdsourced platforms and specialized domains such as healthcare. Collectively, these approaches provide a flexible, transparent, and reliable foundation for advancing human computation systems. Their integration not only enhances scalability and engagement but also strengthens trust, accuracy, and accountability in complex, high-impact applications.

Contributor Roles

· R.J. Martin: Conceptualization, investigation, methodology, validation, writing – original draft, writing – review & editing.

References

[1] L. von Ahn and L. Dabbish, “Designing games with a purpose,” Communications of the ACM, vol. 51, no. 8, pp. 58–67, Aug. 2008. [Online]. Available: https://doi.org/10.1145/1378704.1378719

[2] E. Horvitz, “Principles of mixed-initiative user interfaces,” in Proceedings of the SIGCHI Conference on Human Factors in Computing Systems: The CHI is the Limit, ser. CHI ’99. ACM Press, 1999, pp. 159–166. [Online]. Available: https://doi.org/10.1145/302979.303030

[3] A. J. Quinn and B. B. Bederson, “Human computation: a survey and taxonomy of a growing field,” in Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, ser. CHI ’11. ACM, May 2011, pp. 1403–1412. [Online]. Available: https://doi.org/10.1145/1978942.1979148

[4] C. Schmidbauer, S. Zafari, B. Hader, and S. Schlund, “An empirical study on workers’ preference in human–robot task assignment in industrial assembly systems,” IEEE Transactions on Human-Machine Systems, vol. 53, no. 2, pp. 293–302, 2023. [Online]. Available: https://doi.org/10.1109/thms.2022.3230667

[5] J. Wen, J. Yang, T. Wang, Y. Li, and Z. Lv, “Energy-efficient task allocation for reliable parallel computation of cluster-based wireless sensor network in edge computing,” Digital Communications and Networks, vol. 9, no. 2, pp. 473–482, Apr. 2023. [Online]. Available: https://doi.org/10.1016/j.dcan.2022.06.014


[6] M. Faccio, I. Granata, and R. Minto, “Task allocation model for human-robot collaboration with variable cobot speed,” Journal of Intelligent Manufacturing, vol. 35, no. 2, pp. 793–806, Jan. 2023. [Online]. Available: https://doi.org/10.1007/s10845-023-02073-9

[7] Z. Yuan, R. Wang, T. Kim, D. Zhao, I. Obi, and B.-C. Min, “Adaptive task allocation in multi-human multi-robot teams under team heterogeneity and dynamic information uncertainty,” ICRA 2025, 2024. [Online]. Available: https://doi.org/10.48550/arXiv.2409.13824

[8] A. Tamali, N. Amardjia, and M. Tamali, “Distributed and autonomous multi-robot for task allocation and collaboration using a greedy algorithm and robot operating system platform,” IAES International Journal of Robotics and Automation (IJRA), vol. 13, no. 2, p. 205, Jun. 2024. [Online]. Available: https://doi.org/10.11591/ijra.v13i2.pp205-219

[9] M. Jain, Crowd-Sourced Evaluation of Explainable AI Techniques with Games. Carnegie Mellon University, 2021. [Online]. Available: https://upsalesiana.ec/ing35ar4r9

[10] Z. Kou, Y. Zhang, D. Zhang, and D. Wang, “CrowdGraph: A crowdsourcing multi-modal knowledge graph approach to explainable fauxtography detection,” Proceedings of the ACM on Human-Computer Interaction, vol. 6, no. CSCW2, pp. 1–28, Nov. 2022. [Online]. Available: https://doi.org/10.1145/3555178

[11] Z. Kou, L. Shang, Y. Zhang, and D. Wang, “HC-COVID: A hierarchical crowdsource knowledge graph approach to explainable COVID-19 misinformation detection,” Proceedings of the ACM on Human-Computer Interaction, vol. 6, no. GROUP, pp. 1–25, Jan. 2022. [Online]. Available: http://doi.org/10.1145/3492855

[12] M. Sawant, A. Younus, S. Caton, and M. A. Qureshi, “Using explainable AI (XAI) for identification of subjectivity in hate speech annotations for low-resource languages,” in 4th International Workshop on Open Challenges in Online Social Networks, ser. HT ’24. ACM, Sep. 2024, pp. 10–17. [Online]. Available: http://doi.org/10.1145/3677117.3685006


[13] V. Lai, Y. Zhang, C. Chen, Q. V. Liao, and C. Tan, “Selective explanations: Leveraging human input to align explainable AI,” Proceedings of the ACM on Human-Computer Interaction, vol. 7, no. CSCW2, pp. 1–35, Sep. 2023. [Online]. Available: http://doi.org/10.1145/3610206

[14] C. Zhang, P. van Gorp, M. Derksen, R. Nuijten, W. A. IJsselsteijn, A. Zanutto, F. Melillo, and R. Pratola, “Promoting occupational health through gamification and e-coaching: A 5-month user engagement study,” International Journal of Environmental Research and Public Health, vol. 18, no. 6, p. 2823, Mar. 2021. [Online]. Available: http://doi.org/10.3390/ijerph18062823

[15] C. J. Hellín, F. Calles-Esteban, A. Valledor, J. Gómez, S. Otón-Tortosa, and A. Tayebi, “Enhancing student motivation and engagement through a gamified learning environment,” Sustainability, vol. 15, no. 19, p. 14119, Sep. 2023. [Online]. Available: http://doi.org/10.3390/su151914119

[16] H.-P. Lu and H.-C. Ho, “Exploring the impact of gamification on users’ engagement for sustainable development: A case study in brand applications,” Sustainability, vol. 12, no. 10, p. 4169, May 2020. [Online]. Available: http://doi.org/10.3390/su12104169

[17] P. Bitrián, I. Buil, and S. Catalán, “Enhancing user engagement: The role of gamification in mobile apps,” Journal of Business Research, vol. 132, pp. 170–185, Aug. 2021. [Online]. Available: http://doi.org/10.1016/j.jbusres.2021.04.028

[18] A. S. Alfaqiri, S. F. M. Noor, and N. Sahari, “Framework for gamification of online training platforms for employee engagement enhancement,” International Journal of Interactive Mobile Technologies (iJIM), vol. 16, no. 06, pp. 159–175, Mar. 2022. [Online]. Available: http://doi.org/10.3991/ijim.v16i06.28485

[19] N. Pius Owoh and M. Mahinderjit Singh, “SenseCrypt: A security framework for mobile crowd sensing applications,” Sensors, vol. 20, no. 11, p. 3280, Jun. 2020. [Online]. Available: http://doi.org/10.3390/s20113280


[20] Z. Li, J. Liu, J. Hao, H. Wang, and M. Xian, “CrowdSFL: A secure crowd computing framework based on blockchain and federated learning,” Electronics, vol. 9, no. 5, p. 773, May 2020. [Online]. Available: http://doi.org/10.3390/electronics9050773

[21] T. Abbas, Affective Real-Time Crowd-Powered Conversational Systems. Eindhoven University of Technology, Sep. 2022, Ph.D. dissertation. [Online]. Available: https://upsalesiana.ec/ing35ar4r22

[22] P. Siangliulue, J. Chan, S. P. Dow, and K. Z. Gajos, “IdeaHound: Improving large-scale collaborative ideation with crowd-powered real-time semantic modeling,” in Proceedings of the 29th Annual Symposium on User Interface Software and Technology, ser. UIST ’16. ACM, Oct. 2016, pp. 609–624. [Online]. Available: http://doi.org/10.1145/2984511.2984578

[23] M. Ponti and A. Seredko, “Human-machine-learning integration and task allocation in citizen science,” Humanities and Social Sciences Communications, vol. 9, no. 1, Feb. 2022. [Online]. Available: http://doi.org/10.1057/s41599-022-01049-z

[24] S. Stein and V. Yazdanpanah, “Citizen-centric multiagent systems,” in Proceedings of the 2023 International Conference on Autonomous Agents and Multiagent Systems, ser. AAMAS ’23. Richland, SC: International Foundation for Autonomous Agents and Multiagent Systems, 2023, pp. 1802–1807. [Online]. Available: https://upsalesiana.ec/ing35ar4r24

[25] P. Gupta, T. N. Nguyen, C. Gonzalez, and A. W. Woolley, “Fostering collective intelligence in human–AI collaboration: Laying the groundwork for COHUMAIN,” Topics in Cognitive Science, vol. 17, no. 2, pp. 189–216, Jun. 2023. [Online]. Available: http://doi.org/10.1111/tops.12679

[26] A. Carrera-Rivera, F. Larrinaga, G. Lasa, G. Martinez-Arellano, and G. Unamuno, “AdaptUI: A framework for the development of adaptive user interfaces in smart product-service systems,” User Modeling and User-Adapted Interaction, vol. 34, no. 5, pp. 1929–1980, Aug. 2024. [Online]. Available: http://doi.org/10.1007/s11257-024-09414-0

[27] S. E. Shaw, S. Paparini, J. Murdoch, J. Green, T. Greenhalgh, B. Hanckel, H. M. James, M. Petticrew, G. W. Wood, and C. Papoutsi, “Triple C reporting principles for case study evaluations of the role of context in complex interventions,” BMC Medical Research Methodology, vol. 23, no. 1, May 2023. [Online]. Available: http://doi.org/10.1186/s12874-023-01888-7

[28] L. Uden and N. Willis, “Designing user interfaces using activity theory,” in Proceedings of the 34th Annual Hawaii International Conference on System Sciences, ser. HICSS-01. IEEE Comput. Soc, 2005, p. 11. [Online]. Available: http://doi.org/10.1109/hicss.2001.926547

[29] M. Calzavara, M. Faccio, I. Granata, and A. Trevisani, “Achieving productivity and operator well-being: a dynamic task allocation strategy for collaborative assembly systems in Industry 5.0,” The International Journal of Advanced Manufacturing Technology, Aug. 2024. [Online]. Available: http://doi.org/10.1007/s00170-024-14302-3

[30] M. H. Faisal, A. W. AlAmeeri, and A. A. Alsumait, “An adaptive e-learning framework: crowdsourcing approach,” in Proceedings of the 17th International Conference on Information Integration and Web-based Applications & Services, ser. iiWAS ’15. ACM, Dec. 2015, pp. 1–5. [Online]. Available: http://doi.org/10.1145/2837185.2837249

[31] J. C. Cheung and S. S. Ho, “The effectiveness of explainable AI on human factors in trust models,” Scientific Reports, vol. 15, no. 1, Jul. 2025. [Online]. Available: http://doi.org/10.1038/s41598-025-04189-9

[32] M. T. Ribeiro, S. Singh, and C. Guestrin, “‘Why should I trust you?’: Explaining the predictions of any classifier,” in Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, ser. KDD ’16. ACM, Aug. 2016, pp. 1135–1144. [Online]. Available: http://doi.org/10.1145/2939672.2939778

[33] R. R. Selvaraju, M. Cogswell, A. Das, R. Vedantam, D. Parikh, and D. Batra, “Grad-CAM: Visual explanations from deep networks via gradient-based localization,” in 2017 IEEE International Conference on Computer Vision (ICCV). IEEE, Oct. 2017, pp. 618–626. [Online]. Available: http://doi.org/10.1109/iccv.2017.74

[34] K. Borys, Y. A. Schmitt, M. Nauta, C. Seifert, N. Krämer, C. M. Friedrich, and F. Nensa, “Explainable AI in medical imaging: An overview for clinical practitioners – saliency-based XAI approaches,” European Journal of Radiology, vol. 162, p. 110787, May 2023. [Online]. Available: http://doi.org/10.1016/j.ejrad.2023.110787

[35] Q. Wang, Y. Wan, F. Feng, and X. Wang, “Threshold optimization of task allocation models in human–machine collaborative scoring of subjective assignments,” Computers & Industrial Engineering, vol. 188, p. 109923, Feb. 2024. [Online]. Available: http://doi.org/10.1016/j.cie.2024.109923

[36] L. Sun, X. Yu, J. Guo, Y. Yan, and X. Yu, “Deep reinforcement learning for task assignment in spatial crowdsourcing and sensing,” IEEE Sensors Journal, vol. 21, no. 22, pp. 25323–25330, Nov. 2021. [Online]. Available: http://doi.org/10.1109/jsen.2021.3057376

[37] S. N. Ahmadabadi, M. Haghifam, V. Shah-Mansouri, and S. Ershadmanesh, “Design and evaluation of crowdsourcing platforms based on users’ confidence judgments,” Scientific Reports, vol. 14, no. 1, Aug. 2024. [Online]. Available: http://doi.org/10.1038/s41598-024-65892-7


[38] J. Cox, E. Y. Oh, B. Simmons, C. Lintott, K. Masters, A. Greenhill, G. Graham, and K. Holmes, “Defining and measuring success in online citizen science: A case study of Zooniverse projects,” Computing in Science & Engineering, vol. 17, no. 4, pp. 28–41, Jul. 2015. [Online]. Available: http://doi.org/10.1109/mcse.2015.65

[39] S. A. Triantafyllou, T. Sapounidis, and Y. Farhaoui, “Gamification and computational thinking in education: A systematic literature review,” Salud, Ciencia y Tecnología - Serie de Conferencias, vol. 3, p. 659, Mar. 2024. [Online]. Available: http://doi.org/10.56294/sctconf2024659

[40] H. Cigdem, M. Ozturk, Y. Karabacak, N. Atik, S. Gürkan, and M. H. Aldemir, “Unlocking student engagement and achievement: The impact of leaderboard gamification in online formative assessment for engineering education,” Education and Information Technologies, vol. 29, no. 18, pp. 24835–24860, Jun. 2024. [Online]. Available: http://doi.org/10.1007/s10639-024-12845-2

[41] A. Tomar and S. Tripathi, “Bcsom: Blockchain-based certificateless aggregate signcryption scheme for Internet of Medical Things,” Computer Communications, vol. 212, pp. 48–62, Dec. 2023. [Online]. Available: http://doi.org/10.1016/j.comcom.2023.09.027

[42] P. Runeson and M. Höst, “Guidelines for conducting and reporting case study research in software engineering,” Empirical Software Engineering, vol. 14, no. 2, pp. 131–164, Dec. 2008. [Online]. Available: http://doi.org/10.1007/s10664-008-9102-8
